Internal Medicine Exam turns out too difficult for ChatGPT

For the time being, artificial intelligence will not be treating patients. ChatGPT, an AI-based chatbot, failed the specialization exam in internal medicine. Nor did AI tools dedicated to the medical field fare well on such tasks, as scientists from Collegium Medicum of Nicolaus Copernicus University in Toruń have tested and reported.

ChatGPT is an advanced language model based on artificial intelligence that generates answers to the questions it is given. It was created by the American research laboratory OpenAI using a large database, so that it could hold a conversation on a wide range of topics, from small talk to specialist areas of knowledge. Artificial intelligence is used more and more widely in medicine, for example in diagnosing cancerous lesions and orthopedic fractures, evaluating specimens in pathomorphology, and designing drugs. Research has shown that such algorithms can be more sensitive than the human eye and may help medics care for patients, but that they will not replace doctors, at least not in the near future.

– When we talk about medical conditions with friends or doctors, we often hear that someone has googled their symptoms and diagnosed themselves – says Dr. Szymon Suwała from the Chair of Endocrinology and Diabetology of the Faculty of Medicine, Collegium Medicum, NCU. – Now these possibilities are broadening, as you can talk with ChatGPT or Gemini about your ailments. Both chatbots are based on data from Google or other search engines, so let us not be fooled into thinking that their diagnoses are any better.

Andrzej Romański

There are already chatbots in which artificial intelligence draws on other sources, for instance PubMed, an English-language search engine indexing articles in medicine and the biological sciences. It might seem that in such a case the information would be more precise. Yet the research showed that AI based on medical literature was unable to pass even those exams that ChatGPT handled, at least partly. – Patients will keep using Google, Facebook or various chatbots, because they are interested in their health and the Internet is now ubiquitous and accessible – says Dr. Suwała. – Still, I always encourage people to seek information from medical specialists, as they are more competent than artificial intelligence.

Failed examination

To test this, scientists from Collegium Medicum checked how artificial intelligence would cope with the examination in "interna", the colloquial name for internal medicine, the field of medicine concerned with internal diseases. It is considered "the queen of medicine". ChatGPT simply failed this examination.

– There were only a few smaller areas where the AI's score was satisfactory, and even there it was much lower than that of human exam takers – says Dr. Suwała.

In this specialization examination, candidates must answer 120 questions, each with five answer options of which only one is correct. To become a specialist, a doctor must answer at least 60% of the questions correctly. If the score is good or better, the candidate is exempt from the oral part of the examination. Those who score below the threshold must retake the exam.

The researchers skipped the oral part of the examination and put a whole range of written questions to ChatGPT. – We also removed questions that ChatGPT would not be able to answer for technical reasons, namely those containing images or referring to elements of another question – explains Dr. Suwała. – In total, over 10 sessions we asked the artificial intelligence 1191 questions. In none of the sessions did the AI qualify for the oral part of the exam, which means it never scored 60%. The proportion of correct answers ranged from 47.5% to 53.3%.

The medics analyzed the length of the questions and their difficulty index. It turned out that, like human test takers, ChatGPT did better on questions considered easier; however, the correlation was not one-to-one compared with human results. The AI scored slightly better on easier questions and worse on more difficult ones. There was no correlation between the length of a question and the quality of the answer. – We were dealing with a machine built to process a large number of characters. It is not a human who becomes more and more tired the longer they read a question and analyze all its aspects – says Dr. Suwała.

The scientist notes that there were also longer questions on which ChatGPT got confused. These were mainly questions that required spotting a specific keyword, which a doctor could pick out by understanding what the question was really about. Another curiosity was that the artificial intelligence sometimes knew the correct answer but marked a wrong one.

– Every time, apart from the answer, we got a descriptive explanation of why that particular answer was chosen – says Dr. Suwała. – This is how we noticed that it repeatedly marked the wrong answer and then described its decision process in a way indicating that it knew the correct one. We do not know why this happened.

The specialists from Collegium Medicum published the results of their research in the journal "Polish Archives of Internal Medicine", in the article "ChatGPT fails the Polish board certification examination in internal medicine: artificial intelligence still has much to learn".

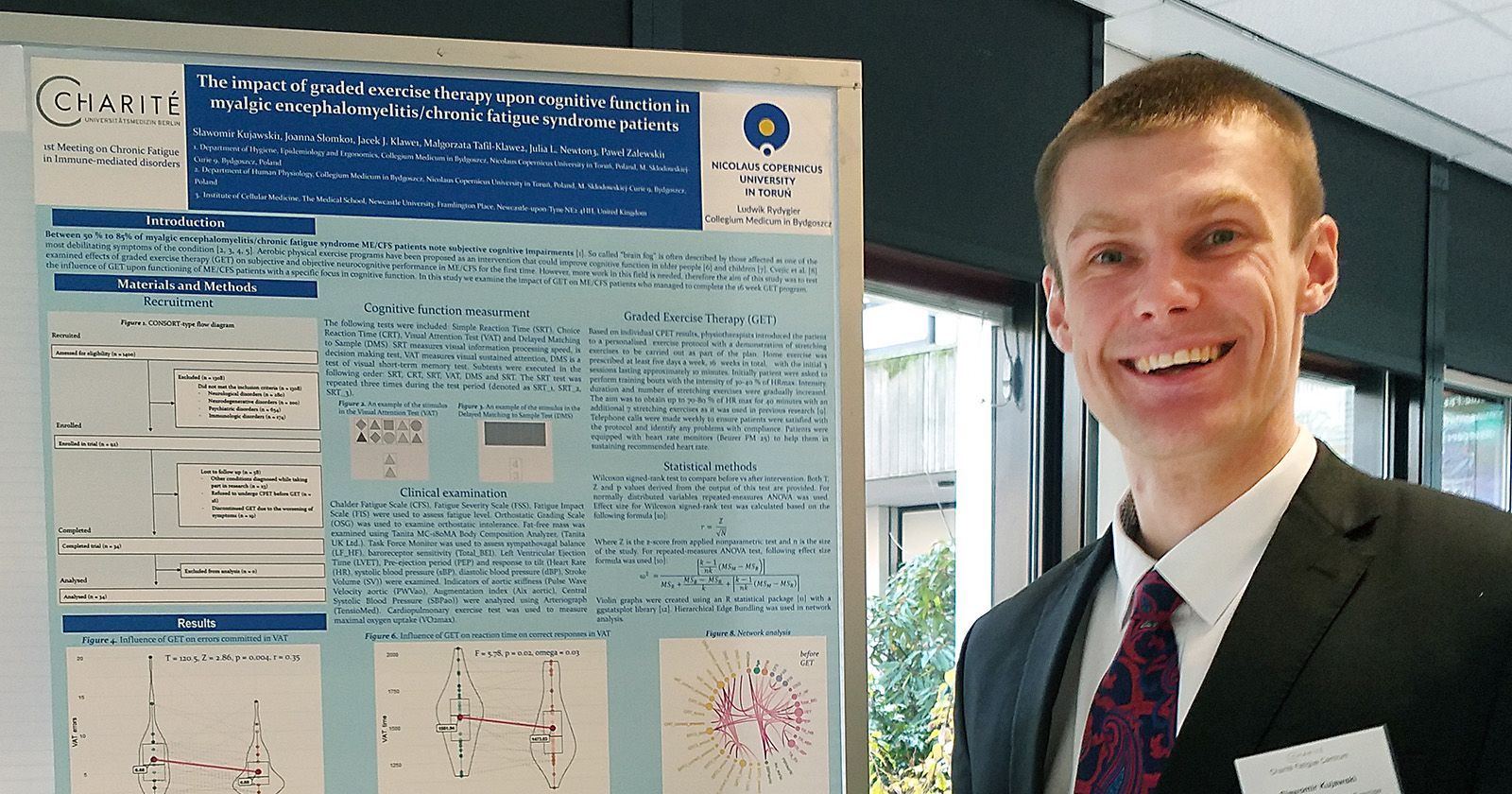

The medics from Collegium Medicum NCU were prompted to conduct this research by ChatGPT's success in the USMLE (United States Medical Licensing Examination). This three-step exam is designed for future physicians who want to practice in the USA. Passing the USMLE is a prerequisite for obtaining a license to practice medicine in the United States.

In Poland, students who graduate from a medical university receive a medical diploma. However, to obtain a full license to practice medicine, they must pass the Medical Final Examination, taken during their postgraduate internship or in the final year of their studies. To pass this exam, they must answer at least 59% of its 200 questions correctly. LEK (the Polish abbreviation of the exam's name) is thus the closest equivalent of the USMLE. As in the US, ChatGPT passed the LEK in Poland, which the researchers from Collegium Medicum also checked. – These questions are simpler and cover rather basic issues, as doctors answer them right after completing their studies – explains Dr. Suwała.

The literature did not help

The scientists wondered why systems based on specialist literature performed worse on the exams than the generally available ChatGPT. No definitive answer has been found. They suspect the reason is that these systems rely on research literature rather than textbooks, whereas for those who write exam questions textbooks remain fundamental. – In addition, scientific articles are written to confirm or question what has already been discovered – explains Dr. Suwała. – This creates a kind of mishmash that artificial intelligence may not be able to cope with. Moreover, ChatGPT is widely promoted and developed, certainly much faster than a niche tool such as artificial intelligence based on the PubMed literature.


According to the scientist from Collegium Medicum of NCU, there is still a long way to go before the artificial intelligence we know from everyday use, such as ChatGPT or Gemini, passes specialization exams. It is unlikely that anyone will deliberately develop it in this direction. However, an AI trained for a specific field of medicine on appropriate textbooks will eventually cope with the exam. – Artificial intelligence may pass the exam, but it will not be able to cure patients – says Dr. Suwała. – Medical sciences, contrary to appearances, are not exact sciences. They have more in common with the humanities. It is not without reason that we speak of the art of medicine. Very often, when in contact with a patient, we notice certain nuances that artificial intelligence may miss. We often tell students that diseases do not read books. A patient may suffer from several different diseases at once, may be genetically different, and suddenly it turns out that a disease which seemed simple, logical and precisely described has developed completely differently in that patient. Will artificial intelligence be able to combine all these components? Perhaps it will in the future, but I do not think it will happen within the next days, weeks, months or even years. I think it will take decades.

Technology will not replace doctors, but it can help them care for patients. In ultrasound examinations, it can evaluate images and suggest whether a lesion requires a biopsy or has a very low oncological potential. However, to do this, it must first be trained. Moreover, it is the doctor who ultimately decides whether or not to follow the algorithm's suggestion. Doctors openly say that artificial intelligence should and must be implemented in everyday clinical practice, as it can make life easier for both doctors and patients. For example, patients do not always remember or mention that they take certain medications, whereas AI analyzing Big Data (data sets so large and complex that they require new technologies to process) could identify which medications a patient has recently taken and inform the doctor.


Scientists also point to so-called artificial intelligence hallucinations. If we ask about a specific symptom of a disease, AI may produce completely false information based on material it has made up itself. Someone who trusts it too much may suddenly become convinced that they are suffering from a disease that does not really exist.

Specialists from Collegium Medicum NCU are not the first in the world to test ChatGPT. It has passed European examinations in interventional cardiology and ophthalmology, but it failed an orthopedics test, where its knowledge was assessed at the level of a first-year resident, i.e. someone with very little experience. A gastroenterology exam also proved too difficult for the algorithm, and in Poland it failed exams in urology, endocrinology and diabetology.

– It is surprising that ChatGPT managed to pass the test in interventional cardiology, as it is a very difficult specialization – notes Dr. Suwała. – Maybe this is because the field relies largely on English-language guidelines, to which ChatGPT could have had better access. On the other hand, when we analyzed whether the language barrier could be a problem for AI, it seemed not to be. A French-language article described how ChatGPT performed on the same exam in French and in English, and the results were almost identical.

Scientists emphasize that artificial intelligence makes mistakes more often than doctors and will not replace them for a long time. However, they admit that patients place great trust in computers. – If we ensure education at an appropriate level, so that people can approach the information they receive critically, there is a chance that artificial intelligence will not kill them – says Dr. Suwała. – It may happen that, by listening to ChatGPT, people will not contact a doctor in time and it will be too late for treatment.

NCU News